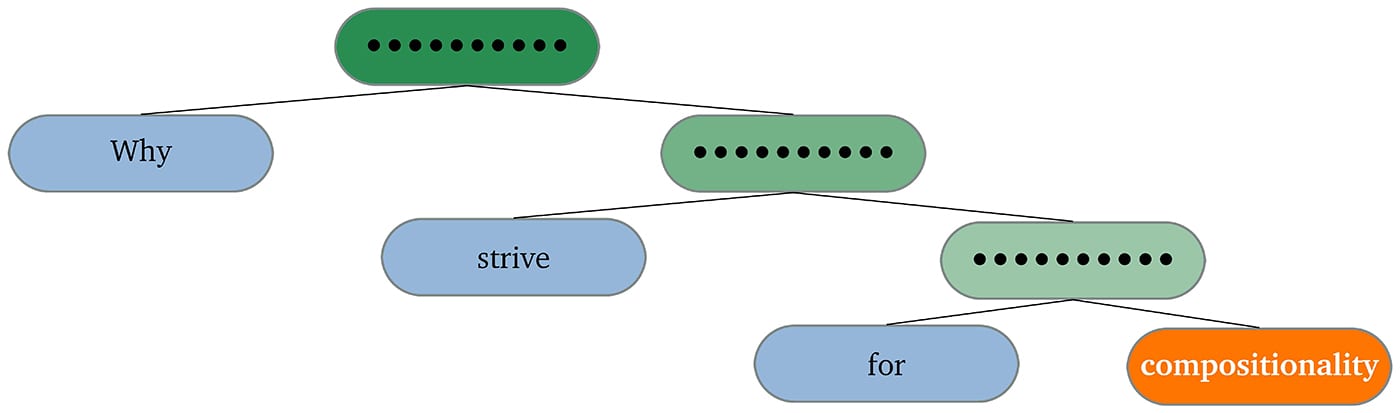

Compositionality or generalization?

Christopher Potts Stanford University

September 30, 2020 · 4:30–6:00 pm · via Zoom

Program in Linguistics

The principle of compositionality says, roughly, that the meaning of a complex syntactic phrase is determined by the meanings of its constituent parts. When linguists seek compositional analyses of linguistic phenomena, what principles guide their investigations, and what higher-level goals are they actually pursuing? In this talk, I’ll begin by trying to answer these questions, and I’ll use those answers to argue that linguists should seriously consider recent models that combine compositional grammars with machine learning. These models are guided by the principle of compositionality, but they are not, strictly speaking, compositional, and compositionality is not a primary objective for their proponents. Rather, the objective of these models is to *generalize*: to make accurate predictions about novel cases. This is a broader goal than compositionality, and adopting it can lead to richer theories of language and language use.

Christopher Potts is Professor and Chair of Linguistics and, by courtesy, Professor of Computer Science at Stanford. In his research, he develops computational models of linguistic reasoning, emotional expression, and dialogue. He is the author of the 2005 book *The Logic of Conventional Implicatures* (Oxford University Press) as well as numerous scholarly papers in linguistics and natural language processing.

Members of the Princeton University community can register at https://forms.gle/cazyoHezQ5ZLixt67 to receive the event link.